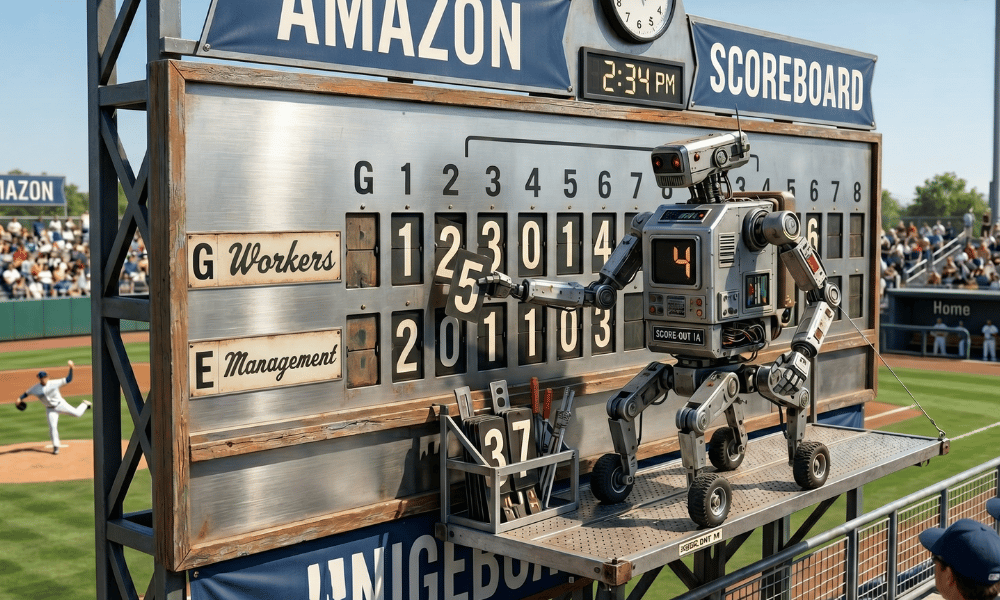

Inside Amazon's "tokenmaxxing" scandal lies a textbook warning about what happens when organisations measure AI adoption rather than AI value

Amazon employees are using an internal AI tool to run unnecessary, low-value tasks — not because the work needs doing, but because the activity inflates their scores on a company leaderboard tracking artificial intelligence usage.

The practice, which workers have taken to calling "tokenmaxxing," was reported yesterday by the Financial Times and has since spread as a case study across the technology industry. It exposes, in unusually vivid terms, what happens when a productivity metric becomes a target: the metric stops measuring productivity, and starts measuring the human capacity for gaming.

The episode is not merely a Silicon Valley curiosity. For HR leaders who are currently designing, implementing or being asked to justify AI adoption programmes — which is to say, most of them — it is a live demonstration of a failure mode that is easy to build and hard to reverse.

What Amazon Built, and What Went Wrong

Amazon had been widely deploying MeshClaw, an in-house agentic AI product that allows employees to create software agents capable of connecting to workplace tools and completing tasks on a user's behalf. The bot can initiate code deployments, triage emails and interact with applications including Slack, according to the Financial Times. An internal memo described it in terms that will be familiar to anyone who has sat through an AI all-hands briefing: it "dreams overnight to consolidate what it learned, monitors your deployments while you're in meetings and triages your email before you wake up."

More than three dozen Amazon engineers worked on the tool. The company positioned it as empowering "thousands of Amazonians to automate repetitive tasks each day."

But Amazon had also introduced targets requiring more than 80 per cent of its developer workforce to use AI tools each week, and had begun tracking token consumption — the units of data processed by AI models, essentially a meter of how much the tools are being run — on internal leaderboards. Team-wide statistics were initially visible to all staff before being restricted so only employees and their managers could view them.

The result was predictable to anyone who has studied organisational behaviour. "There is just so much pressure to use these tools," one Amazon employee told the Financial Times. "Some people are just using MeshClaw to maximise their token usage." Another said the data was being watched regardless of official policy: "Managers are looking at it. When they track usage it creates perverse incentives and some people are very competitive about it."

Amazon told staff that token statistics would not be used in performance evaluations. Workers did not believe it.

A Pattern Across Silicon Valley

Amazon is not alone. Meta employees engaged in similar tokenmaxxing behaviour, competing on an internal leaderboard called "Claudeonomics" that ranked the company's roughly 85,000 workers by token consumption. In a 30-day window, total usage on the dashboard exceeded 60 trillion tokens. The leaderboard was taken down after reporting by The Information, but Meta's CTO Andrew Bosworth publicly endorsed the underlying logic — pointing to his best engineer spending the equivalent of their salary in AI tokens as evidence of a productivity multiplier.

At Microsoft, a senior leader sent an internal memo stating AI use was "no longer optional, it's core to every role and every level." A company spokesperson later clarified there was "no formal review of an employee's AI usage" — the kind of clarification issued when an original message lands harder than intended.

A May 2026 CNBC report noted that "almost every Fortune 500 is tracking overall AI usage," with tokens, prompt counts, licence activations and seat-utilisation rates becoming standard surveillance inputs alongside older metrics like badge-swipe and keyboard activity.

The financial stakes behind all this pressure are enormous. Combined 2026 capital expenditure from Amazon, Microsoft, Alphabet and Meta is tracking between $650 billion and $700 billion. Every executive who has signed off on those commitments has an investor relations problem if adoption numbers look weak. Token counts are the answer — unless employees are manufacturing the counts themselves.

The HR Problem at the Centre of This

The Amazon story is being described by analysts as a textbook case of Goodhart's Law: the principle that when a measure becomes a target, it ceases to be a good measure. The moment token consumption was tied to leaderboards that managers could see, it stopped measuring AI productivity and started measuring competitive anxiety.

HR leaders designed this. Not maliciously — but the incentive structure that produced tokenmaxxing is a people management structure, not a technology one. Weekly usage targets, visible leaderboards, ambiguous signals about whether the numbers feed into performance reviews: these are HR design choices, and they have produced a predictable human response.

As HRD Canada has reported, only four per cent of employers see employee resistance as a barrier to AI adoption — yet nearly a quarter of workers say they would consider leaving a job if forced to use AI tools in ways they did not support. The gap between those two numbers describes the same dynamic playing out at Amazon: employees complying visibly and resisting quietly. Tokenmaxxing is simply a more industrious version of that quiet resistance.

Canadian workers are not immune. An Angus Reid and Growclass report covered by HRD found that while 84 per cent of employees are enthusiastic about AI, 56 per cent worry about job security when working alongside AI agents, and 54 per cent feel they are falling behind peers in adoption — a figure that jumps to 61 per cent among non-managers. More than four in ten Canadian workers admitted feeling pressure to adopt AI technologies in their role. In that climate, a leaderboard tracking how much AI you run each week is not an adoption nudge. It is a source of anxiety that produces exactly the gaming Amazon is now dealing with.

The Canadian Legal Dimension

There is a specific legal dimension that Canadian HR leaders need to take seriously.

As HRD has reported on the emerging Canadian regulatory landscape, Bill C-27, which contains the proposed Artificial Intelligence and Data Act (AIDA), would classify employment-related AI uses as high-impact, requiring accountability, transparency and meaningful human oversight. David Krebs of Miller Thomson has been explicit: if you are using AI data in a tool that then gives outputs affecting employment, "that will be considered high impact. And with high impact, there's accountability, there's transparency, there has to be good human oversight." An AI leaderboard that managers can see and informally use to assess performance is precisely the kind of use case AIDA is designed to regulate.

Ontario is already moving. As HRD has reported, employers with 25 or more employees in the province have been required since January 2026 to disclose when AI is used to screen, select or assess job applicants. Quebec's Law 25 requires transparency around automated decision-making. The trajectory across Canada is consistently toward greater disclosure, not less.

That trajectory makes the ambiguity at the heart of Amazon's situation — telling employees their token data would not feed into reviews while managers informally tracked the leaderboard — exactly the kind of practice that creates legal exposure. If an AI metric is being used to assess performance, employees have a right to know. The fact that Amazon told workers otherwise and workers didn't believe it is a governance failure with regulatory implications as that legislation matures.

HRD has also reported on the Ontario Auditor General's scathing assessment of AI governance in the Ontario Public Service, released just today. Of approximately 55,000 OPS staff, only 1,800 (about three per cent) had completed responsible AI training. Between April and August 2025, roughly 12,000 OPS employees accessed some 400 AI-related websites on government devices, with 60 per cent of those sites rated unsafe. The report is a stark reminder that ungoverned AI adoption, whether it looks like tokenmaxxing, shadow AI, or simply staff left to figure it out alone, is not a Silicon Valley problem. It is a Canadian workplace problem happening right now.

The Measurement Problem Is Also a Security Problem

Multiple Amazon employees told the Financial Times they were alarmed by the security profile of MeshClaw itself. The tool was granted permission to act on a user's behalf — initiating code deployments, interacting with internal systems, sending communications. One employee said: "The default security posture terrifies me. I'm not about to let it go off and just do its own thing."

This concern sits alongside the gaming problem rather than beneath it. An AI agent that employees are running on unnecessary tasks to inflate usage scores is an agent taking real actions in real systems — creating code deployments that did not need to happen, sending emails that did not need to be sent. The perverse incentive structure does not just produce misleading productivity data; it produces real operational noise.

HRD has reported that only 25 per cent of Canadian organisations have fully operational AI governance programmes, even as 82 per cent report moderate to extensive AI use. Tokenmaxxing is a corporate-sanctioned version of exactly the kind of ungoverned AI activity that governance programmes are designed to prevent — except that the governance failure here was built into the incentive structure by management.

Canadian research covered by HRD found that for every 10 hours of efficiency gained through AI, nearly four are lost correcting, clarifying or rewriting AI-generated content. Add leaderboard pressure that rewards running AI more rather than running it better, and that rework toll compounds further. Only 14 per cent of employees consistently achieve net-positive outcomes from AI use. The question tokenmaxxing raises is how much of the AI activity generated by the remaining 86 per cent of employees is similarly unproductive but never flagged, because the leaderboard only measures volume.
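For readers who want to see the arithmetic, the gap between volume and value can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a description of how Amazon or any vendor scores usage: the `WeeklyUsage` class, its field names and every figure below are hypothetical, and the first record simply treats the "nearly four in 10" rework ratio as four hours lost for every 10 gained.

```python
from dataclasses import dataclass


@dataclass
class WeeklyUsage:
    """One employee's week of AI use. All fields are hypothetical."""
    tokens: int               # the only thing a volume leaderboard sees
    gross_hours_saved: float  # time the tool appeared to save
    rework_hours: float       # time spent correcting or rewriting AI output

    def net_hours_saved(self) -> float:
        # Value proxy: time saved minus time spent fixing the output.
        return self.gross_hours_saved - self.rework_hours


# Illustrative values only; not taken from any report.
useful = WeeklyUsage(tokens=2_000_000, gross_hours_saved=10.0, rework_hours=4.0)
padded = WeeklyUsage(tokens=2_000_000, gross_hours_saved=1.0, rework_hours=1.0)

# Identical on a token leaderboard, very different in value delivered.
print(useful.tokens == padded.tokens)  # True
print(useful.net_hours_saved())        # 6.0
print(padded.net_hours_saved())        # 0.0
```

Both records would rank identically on a token leaderboard, yet only one of them represents time actually returned to the business. Net hours is itself a rough proxy, but it at least points at outcomes rather than activity.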

What Canadian HR Leaders Should Do Now

The Amazon episode arrives at a moment when nearly 73 per cent of executives globally say their AI investments have already fallen short of expectations, and 70 per cent are prepared to cut AI budgets if 2026 goals are not met. The pressure flowing from those expectations into HR teams — through KPIs, adoption targets and the implicit understanding that usage statistics will be scrutinised — is real. But the way it is currently being transmitted into the workforce is producing the opposite of what it intends.

Several practical observations for people professionals:

Measuring usage is not measuring value. Token counts, weekly active users and seat-utilisation rates tell you whether employees are running the tools. They tell you nothing about whether the tools are producing better work. Research cited by HRD found that a quarter of Canadian knowledge workers report saving no time with AI at all, and another 44 per cent save less than four hours a week — well below what most organisations need to justify the cost and disruption of large-scale adoption. A token leaderboard does nothing to change that. It may make it worse.

Leaderboards drive performance theatre, not performance. The mechanism at Amazon is structurally similar to the Wells Fargo fake-accounts scandal: aggressive targets that staff believe are tied to evaluation produce the appearance of success regardless of whether anything of value was delivered. As one HRD report noted, training without governance creates chaos, and governance without training creates bureaucracy. A leaderboard is neither.

The ambiguity about whether metrics feed into reviews is the problem, not the solution. Amazon told employees that token statistics would not inform performance evaluations. Employees did not believe it, and behaved accordingly. Research covered by HRD finds that employees act on what they believe management is watching, not on what policy documents say. If there is any possibility that an AI metric feeds into decisions about someone's career, they will optimise for it.

Transparency is the variable that changes behaviour. HRD has reported that where organisations clearly communicate their AI strategy, 92 per cent of workers report productivity gains, 30 percentage points more than among those without such communication. The organisations performing best on AI adoption are those in which employees understand why the technology is being deployed and what it is expected to produce. Sonja Nelsen, VP of HR at Peak Group of Companies, put it plainly to HRD: "We need to have a really proactive approach and not wait to bring in all this new technology." The communication has to come first, before the targets.

Regulators are moving. The direction of travel in Canadian law — Bill C-27's AIDA, Ontario's Working for Workers Act AI disclosure requirements, Quebec's Law 25 — is consistently toward greater accountability for how AI is used to assess and manage workers. The legal minefield, as HRD has previously reported, includes the risk of constructive dismissal claims if AI-driven changes materially alter employees' duties without proper process. Organisations building tokenmaxxing-style incentive structures now are creating the kind of paper trail that will be uncomfortable when those obligations crystallise.

Amazon spent $200 billion this year to make AI central to how its employees work. The tokenmaxxing problem did not cost a dollar to build. It came free, with the leaderboard.