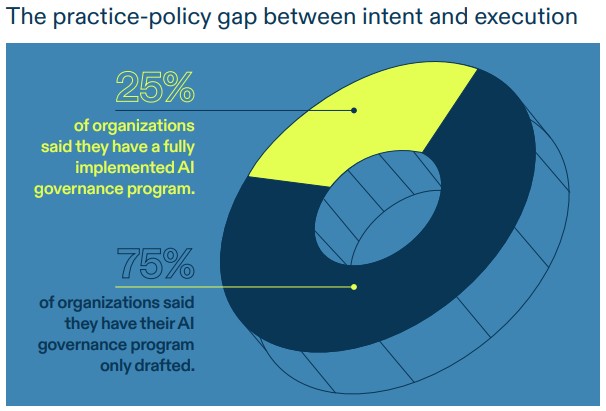

'Turning those policies into daily practice remains a work in progress'

Organisations are rapidly integrating artificial intelligence (AI) into core business functions, but governance practices have not kept pace, according to a report from AuditBoard.

Overall, 82% of companies report moderate to extensive AI use.

However, only 25% have implemented fully operational AI governance programmes, finds a survey of over 400 GRC and audit professionals across the United States, Canada, Germany, and the United Kingdom.

“Organisations understand the risks; they’ve written them into their policies. But turning those policies into daily practice remains a work in progress,” the report from AuditBoard states.

Only 7% of Canadian tech leaders consider their organisations to be advanced in AI implementation, significantly lower than the 17% reported globally, according to a previous report.

What's stopping companies from effective AI governance?

The AuditBoard report also raises concerns over the increasing use of “shadow AI” – employee-initiated tools adopted outside of formal procurement and IT channels.

While 90% of respondents expressed confidence in their ability to monitor such tools, only 67% conduct formal risk assessments of third-party AI systems, finds the survey of 412 respondents sourced from a leading global online panel provider.

“Overconfidence, in this context, becomes a risk in itself,” AuditBoard warns.

Without structured oversight, shadow AI tools may introduce vulnerabilities, including privacy breaches and compliance violations.

AuditBoard notes that organisations are often prioritising advanced automation—such as risk evaluations and third-party assessments—before establishing basic governance foundations like model inventories and access approvals. This creates what the report describes as a “governance illusion,” where the appearance of control masks underlying weaknesses.

"This report validates the critical need for a more integrated, operational approach to AI risk," says Michael Rasmussen, CEO of GRC Report.

Recommendations

AuditBoard shares the following recommendations for companies:

- Translate policy into practice – Define how policies apply to real-world scenarios: which teams review AI use cases, how model performance is monitored, and what happens when issues arise. Embed governance into daily decisions, not just documents.

- Build and empower cross-functional teams – AI governance isn’t owned by one function. Risk, compliance, product, legal, security, and engineering all need a seat at the table. Establish cross-functional councils with clearly defined responsibilities and decision-making authority to ensure consistent execution.

- Automate strategically, not prematurely – Focus first on core controls like AI inventories, access approvals, and documentation standards. Scaling without structure risks embedding gaps rather than closing them.

- Train and communicate continuously – Roll out training tailored by function and seniority. Communicate policies clearly and often, with internal reporting and visible expectations to build a culture of responsible AI use.

- Stay agile and adaptive – Shift from annual reviews to continuous updates, with teams structured to respond to new tools, risks, and regulatory changes as they emerge.

“Governance must become part of daily operations, baked into how AI is evaluated, approved, and monitored at every stage of its lifecycle,” AuditBoard states.

Business leaders around the world continue to diverge in their views on the adoption of generative AI in the workplace, according to a previous NTT DATA report.