A provincial audit exposes dangerous gaps in AI governance — and the lessons apply to HR leaders in any sector

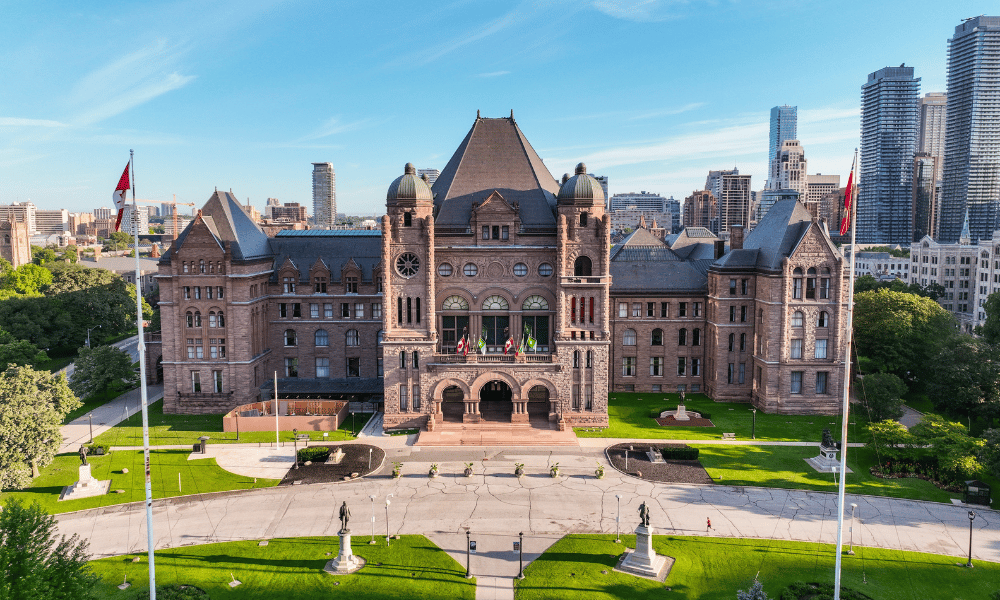

When Ontario's Auditor General Shelley Spence released a special report on the province's use of artificial intelligence on May 12, the findings landed like a warning shot. Thousands of civil servants had been routinely turning to unsanctioned AI tools on government-issued devices, uploading sensitive data to platforms with little to no security oversight — and almost nobody had been trained to know any better.

For HR leaders watching from the sidelines, the report offers what may be an uncomfortably familiar reflection.

The audit, conducted by the Office of the Auditor General of Ontario and covering the Ontario Public Service (OPS) from January to November 2025, examined how AI systems were being procured, deployed, and used across one of Canada's largest public-sector workforces, the office said in a news release. What it found was an organization racing to adopt AI while its governance, training and risk controls lagged dangerously behind.

The training gap at the heart of the problem

Of the OPS's approximately 55,000 staff, just 1,800 — or three per cent — had completed the Ministry's Responsible Use of AI training course as of August 2025, according to the report. That training, launched in January 2024, covers everything from safe use of AI websites to the risks of uploading sensitive data to unsecured platforms. It is not mandatory.

The consequences of that gap were stark. Between April and August 2025, roughly 12,000 OPS employees accessed approximately 400 AI-related websites on government devices. Around 60 per cent of those sites — 244 of them — were rated unsafe or unsecured by Microsoft's Defender cybersecurity tool, with security scores of five or lower out of ten. Fifteen per cent of those low-scoring sites also featured content unrelated to work.

Meanwhile, Microsoft Copilot Chat accounted for just six per cent of staff's overall AI usage, even though it is the one OPS-approved generative AI tool, operates within a secure, data-protected environment, and is covered in the AI training course. Unsanctioned alternatives made up the remaining 94 per cent.

HR professionals across Canadian organizations will recognize this pattern. Employers across sectors are struggling to keep pace with the speed at which employees are adopting AI tools, often without guidance, policy or guardrails.

When AI use outruns policy

The OPS case illustrates what happens when employees are enthusiastic about AI but their organization's governance framework hasn’t kept up. It’s likely that staff bypassed the approved tool because it was easier, more familiar, or more capable — in their perception — than the sanctioned alternative. The government set no targets for adoption of Microsoft Copilot Chat, shared no usage metrics with senior stakeholders, and took no action to understand or address its low uptake, said the report.

OPS staff also encountered an additional vulnerability: when accessing Microsoft Copilot Chat through non-default browsers such as Google Chrome or Mozilla Firefox, the tool's Enterprise Data Protection feature (designed to prevent sensitive data from being used to train external AI models) was automatically bypassed. The Ministry of Public and Business Service Delivery and Procurement, which is leading AI adoption in the OPS, neither restricted browser usage nor communicated this risk through training.

The Auditor General's report benchmarked the OPS's AI strategy against other Canadian and international public-sector organizations and found it lacking in several key areas. It didn’t identify specific actionable items, had no clear plan to prioritize AI use across ministry areas and — critically — did not identify any prohibited AI practices or areas where the technology posed unacceptable risk.

Employers that lack oversight of their employees' AI use face similar strategic blind spots. As HR leaders across North America have found, organizations without formal AI policies, monitoring, and training are exposed to data liability, reputational risk, and loss of control over how their most sensitive information is handled.

Procurement failures compound the risk

The audit's findings didn’t stop at employee behaviour. A review of the procurement process for AI Scribe systems — tools used by family doctors and other health-care professionals to transcribe patient notes — revealed troubling gaps in how vendors were evaluated.

Evaluators noted inaccuracies in the medical notes generated by most approved vendors, including incorrect information, AI hallucinations, and incomplete documentation. Eleven of the 20 approved vendors had not submitted any third-party audit reports, security organization controls certifications or international security standards documentation, yet were approved nonetheless. Five vendors had not submitted required threat risk assessments or privacy impact assessments.

Rapidly evolving AI landscape

The Ontario report arrives at a moment when Canadian employers are navigating a rapidly evolving AI landscape. Understanding why HR must be involved in AI strategy from the very start has become a defining leadership question — and the OPS audit makes the cost of delay vivid.

Auditor General Spence said that the Office will follow up on the implementation of the report's recommendations in two years, noting that the provincial government has accepted most of the report’s recommendations for secure AI practices.

For public-sector and private-sector leaders alike, the clock on the AI training gap is already running.