One of the biggest tech companies on earth has a nascent labour movement on its hands – and it carries lessons for every people professional

Meta Platforms employees at multiple United States offices staged an extraordinary workplace protest on Tuesday, distributing anonymous flyers urging colleagues to sign a petition against the company's recent installation of mouse-tracking software on their work computers – a technology many staff believe is being used to train the very AI systems intended to replace them.

The pamphlets, photographed by Reuters, appeared in meeting rooms, atop vending machines, and balanced on toilet paper dispensers inside the offices of Facebook's parent company. Their message was blunt: "Don't want to work at the Employee Data Extraction Factory?"

The action is not merely a Silicon Valley curiosity. It is a live case study in what happens when organisations deploy workforce surveillance without genuine employee consent, layered on top of deep anxiety about AI-driven redundancies – and it arrives at a moment when Australian HR leaders face precisely the same pressures.

What happened?

The protest comes roughly a week before Meta is expected to begin notifying the approximately 8,000 employees affected by its first wave of job cuts, a 10 per cent workforce reduction that HRD covered in April.

Meta installed mouse-tracking software on employee computers earlier this year, capturing cursor movements, clicks, and navigation through dropdown menus. The company's rationale – offered by spokesperson Andy Stone – is straightforwardly commercial: "If we're building agents to help people complete everyday tasks using computers, our models need real examples of how people actually use them."

Employees, however, see something more troubling. The flyers and an associated online petition cite the US National Labor Relations Act, informing signatories that workers are legally protected when they organise to improve working conditions. The pamphlet distribution is the most visible sign yet of a nascent labour movement inside Meta, where staff have seethed for months on internal platforms and online forums over plans they describe as forcing them to help build their own replacements.

The UK front

Across the Atlantic, a group of Meta employees has launched a formal union drive in the United Kingdom with United Tech and Allied Workers (UTAW), a branch of the Communication Workers Union. The campaign website uses the URL "Leanin.uk" – a pointed reference to former chief operating officer Sheryl Sandberg's best-selling book encouraging women to seek equal footing in the workplace.

Eleanor Payne, an organiser with UTAW, was unsparing in her assessment. "Meta's workers are paying the price for management's reckless and expensive bets," she said. "While executives chase speculative AI strategies, staff are facing devastating job cuts, draconian surveillance, and the cruel reality of being forced to train the inefficient systems being positioned to replace them."

The Australian legal context

For HR leaders in Australia, Tuesday's events are not just a cautionary tale from afar – they are a preview.

Australian workplace surveillance law is a patchwork. As HRD has reported, compliance obligations span state and territory legislation – including Victoria's Surveillance Devices (Workplace Privacy) Act – as well as the federal Privacy Act. Tamsin Lawrence, associate director at Australian Business Lawyers & Advisors, has warned that employers using technology products developed in the United States may be unknowingly operating outside Australian legal norms, where surveillance laws differ significantly.

HRD's analysis of Australia's AI regulatory landscape found that most employees covered by modern awards or enterprise agreements are entitled to formal consultation before major technological change is introduced – a requirement that encompasses AI and automated decision-making. The question of whether deploying mouse-tracking software constitutes a "major change" triggering those obligations is live legal territory.

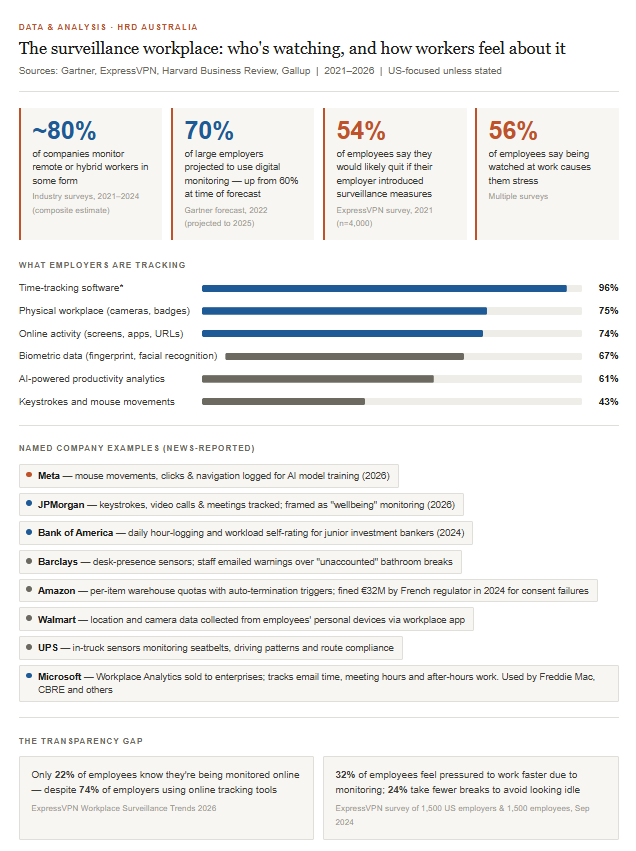

The consequences of getting it wrong go beyond legal exposure. As HRD's examination of workplace surveillance's human cost found, employees who believe monitoring is intrusive – and who are not given adequate information about how data will be used – show measurably lower trust in management, reduced organisational commitment, and higher rates of staff turnover.

The anxiety beneath the flyers

To understand the depth of anger at Meta, it is necessary to appreciate the context. HRD's examination of the AI layoff wave found that prediction markets now give 87 to 92 per cent odds that 2026 will be the worst year on record for tech job cuts. At Meta specifically, a second round of reductions is planned for the second half of the year, with earlier Reuters reporting suggesting the total reduction could eventually reach 20 per cent.

Into this environment, the company introduced technology that records how employees interact with their computers. Whether or not the business justification is legitimate – and Meta's argument that it needs human workflow data to train AI agents is coherent – the optics are catastrophic. Employees are being asked to generate the training data that will power the automation of their own roles, with no meaningful right to opt out.

The HR discipline has a name for the result: psychosocial harm. Employment lawyers have told HRD that stress claims are rising as a direct consequence of intensive monitoring, and that organisations have a duty of care to manage that risk – even when the monitoring itself is technically lawful.

What this means for people professionals

The Meta situation compresses several urgent questions into a single, highly visible case:

Consent and transparency. Research summarised by HRD found that a significant proportion of employees are "unsure if their employers were conducting surveillance" – and that even when disclosure occurs, workers often do not receive enough detail about how their data will be used or what recourse they have. Meta's disclosure appears to have fallen into precisely this gap.

The "training your replacement" problem. What makes the Meta backlash particularly sharp is the perceived instrumental use of the data – not for performance management, but for AI model training. This framing transforms routine productivity monitoring into something workers experience as fundamentally adversarial.

The AI layoff ROI question. New Gartner research covered by HRD this week found that workforce reductions attributed to AI are not translating into meaningful return on investment for most organisations. The report found that firms with higher and lower AI ROI have nearly equal workforce reduction rates – suggesting the cuts are not the efficiency play executives are presenting them as.

Unionisation as a symptom. The UK union drive at Meta is a leading indicator, not an anomaly. Australia's own regulators and unions are sounding the alarm. The Australian Services Union has warned that members in IT and administrative roles are already experiencing AI-driven productivity demands and the threat of digital surveillance. ACTU submissions to government have argued the window for sensible regulation is closing.

The bottom line

No HR leader watching Tuesday's events at Meta should conclude this is merely a problem for American tech giants. The convergence of mass redundancies, opaque AI surveillance, and a workforce that feels it is being asked to engineer its own obsolescence is not unique to Menlo Park.

The practical obligations are clear: consult before deploying monitoring technology, be explicit about what data is collected and why, ensure employees have meaningful recourse, and take seriously the psychosocial risk that intensive surveillance creates – particularly in an environment where job security is already precarious.

Meta's employees did not reach for flyers and federal labour law because the mouse-tracking software was technically impermissible. They reached for them because no one had made them feel that the organisation was on their side. That is, ultimately, a people management failure – and one that HR has both the standing and the responsibility to prevent.